Talk with your Codebase: Beyond Agentic Coding

topics: AI, Product, Development, Agentic Coding

Talking with my codebase saves me a lot of time, and I want to share a concrete example. There's a lot of debate about AI-generated code, and AI-generated anything, but I think it misses the bigger picture: speeding up workflows. Even if you don't ship anything AI-generated, you can use AI-generated scripts to speed up discovery, research, and iteration.

My pet project: Habit.am

Habit is a wordless journaling app. It has some paid users, but it's still in the side-project category for me. My goal is to tweak it until it creates so much value that people share it organically. That isn't happening yet, which makes it a great place to be, with a lot of potential for learning. It's a tricky market, but it's been a labor of love. Can I take journaling and make it easy to be consistent with? It's an interesting challenge, and it keeps pushing me to think in new ways.

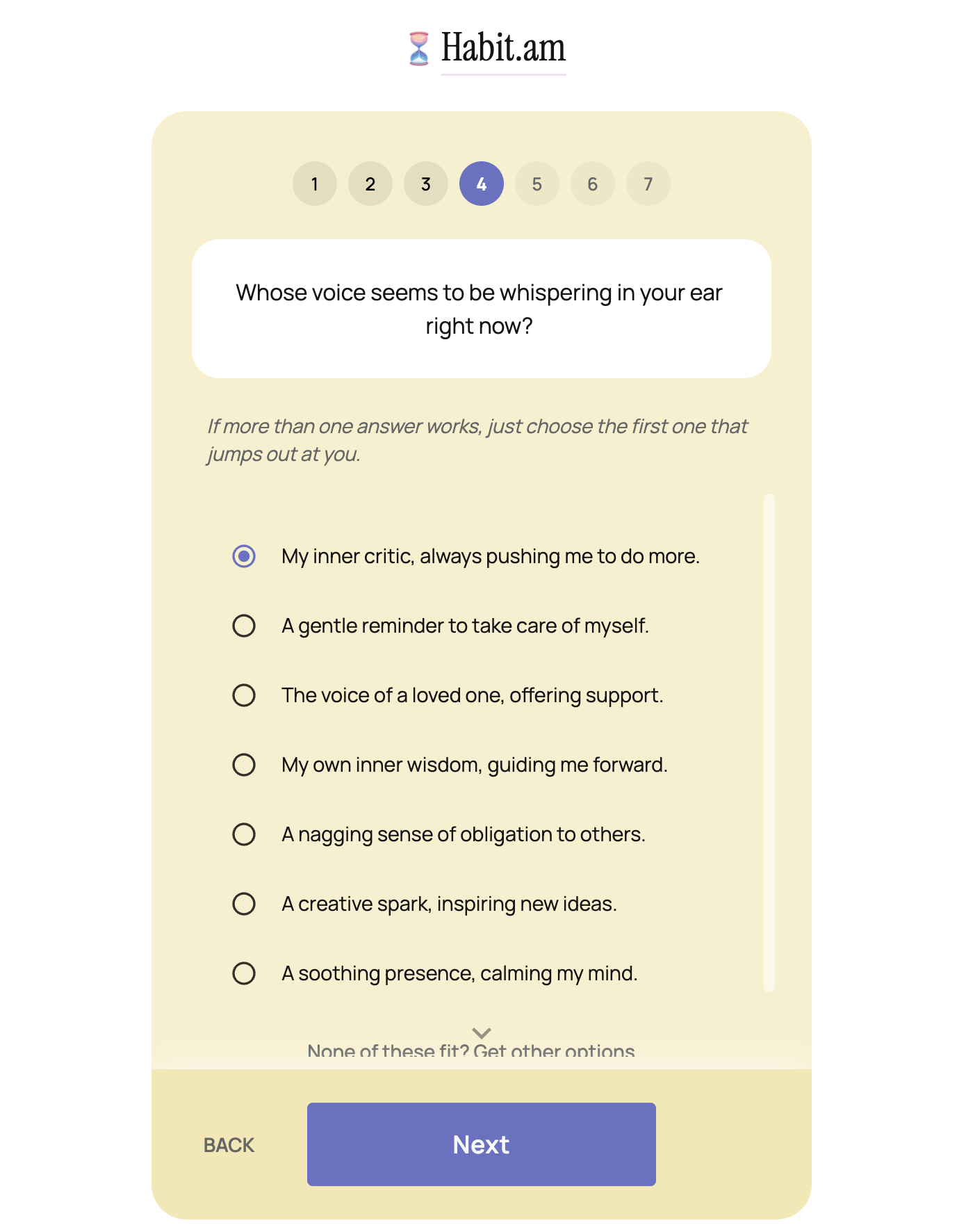

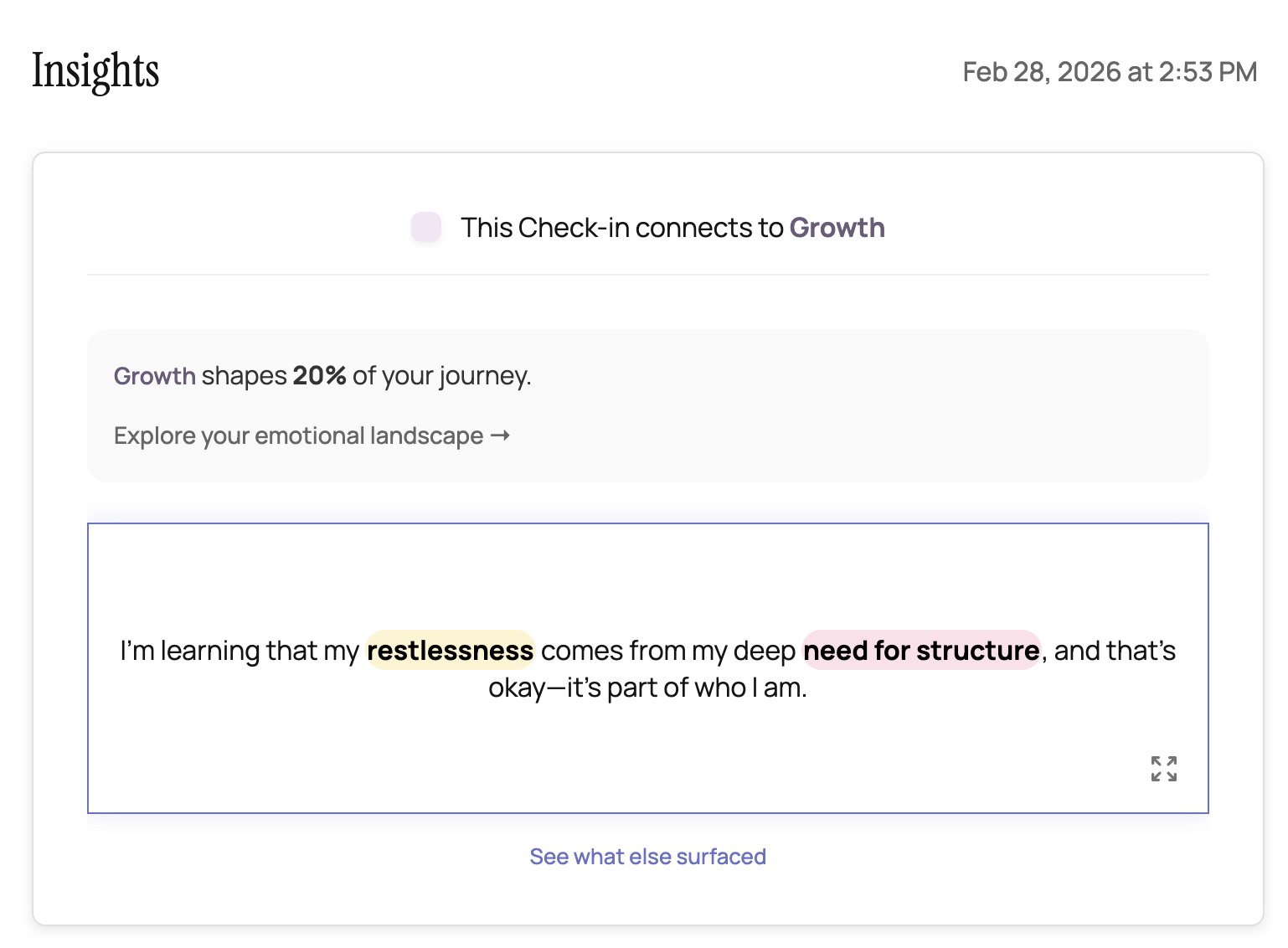

The current version is a tool I use myself for emotional regulation. It guides you through a short check-in (like a quiz) about how you're feeling and why, going seven questions deep, and at the end you get an AI-generated insight. It's cute, it's light, and on hard days, it's a lifesaver.

The quiz starts by selecting a random question from a pre-generated set I created. Some ask you how you're feeling, some give you a list of emotions to pick from, and some ask you to think about your "battery level."

I decided to talk with my codebase about these questions because we've gone through several iterations.

My first prompt read like an instruction, but I knew I was still just thinking this through, so I stuck to "Ask mode." Ask mode helps you understand the context, and sometimes it helps me organize my own thoughts before I give clear instructions.

[Ask Mode] I want to revert the "initial" questions to what they were before. they were better.

My AI buddy went through my git history and found a set of previous questions we used. But they weren't the ones I liked.

[Ask Mode] no i think the good questions were even further back

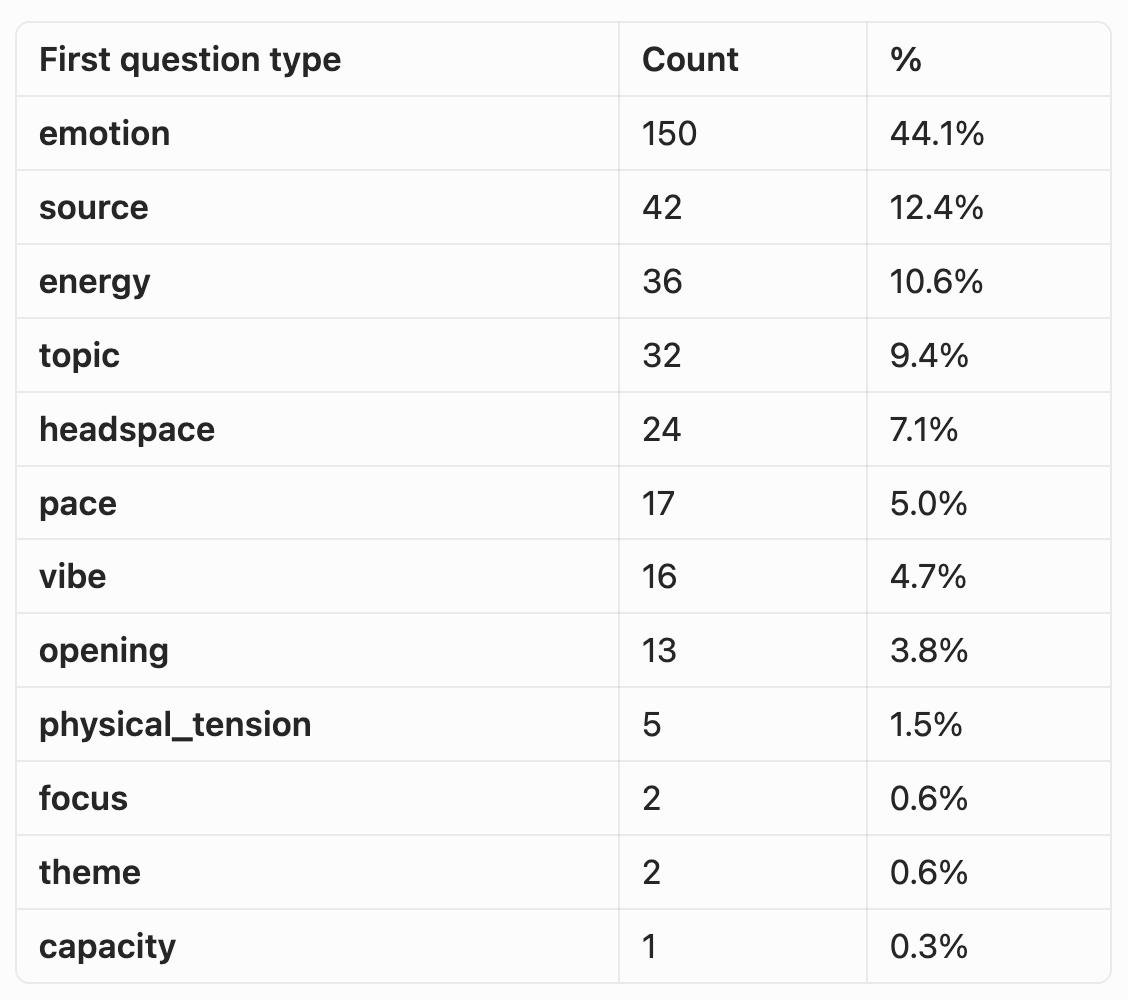

It found the ones I liked, but it also found a bunch more. I realized that I wasn't really sure which ones were the best. I had a gut feeling from using my own product. I thought, "Well, which ones were so good that people finished the experience?" If the starting questions are picked at random and are equal in quality, completed check-ins should be spread evenly across them (but I bet they aren't). Personally, I knew I was more likely to complete a check-in if an easy-to-answer question came first.
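That hunch can be written down as a quick check. Here is a minimal sketch, assuming hypothetical question ids and made-up counts (not Habit's real data): if starters are drawn uniformly at random and are equally good, each one should account for roughly 1/N of the completed check-ins.

```typescript
// Hypothetical shape: number of completed check-ins per starter question.
type CompletedCounts = Record<string, number>;

interface ShareRow {
  question: string;
  observedShare: number; // fraction of all completed check-ins
  expectedShare: number; // 1 / number of questions, under a uniform random pick
  ratio: number;         // observed / expected; 1 means "as expected"
}

// Compare each question's share of completions with the uniform expectation.
function compareToUniform(counts: CompletedCounts): ShareRow[] {
  const questions = Object.keys(counts);
  const total = questions.reduce((sum, q) => sum + counts[q], 0);
  if (questions.length === 0 || total === 0) return [];

  const expectedShare = 1 / questions.length;
  return questions
    .map((question) => {
      const observedShare = counts[question] / total;
      return {
        question,
        observedShare,
        expectedShare,
        ratio: observedShare / expectedShare,
      };
    })
    .sort((a, b) => b.ratio - a.ratio); // most over-represented first
}

// Made-up numbers, for illustration only.
const rows = compareToUniform({ feeling: 60, emotions: 20, battery: 20 });
console.log(rows);
```

A skewed share only means something if each question was shown about equally often, so a completion rate per started check-in is the more direct measure when that data is available.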

[Ask Mode] can you look at the checkin data in firebase and figure out which of these initial questions led to more completed checkins historically?

I then gave it some guidance about my database, including how some of the older data had been moved to a collection I renamed with `_old` at the end. We talked it through until the plan for the script looked good. Then we proceeded:

[Agent Mode] write that node script to run the analysis

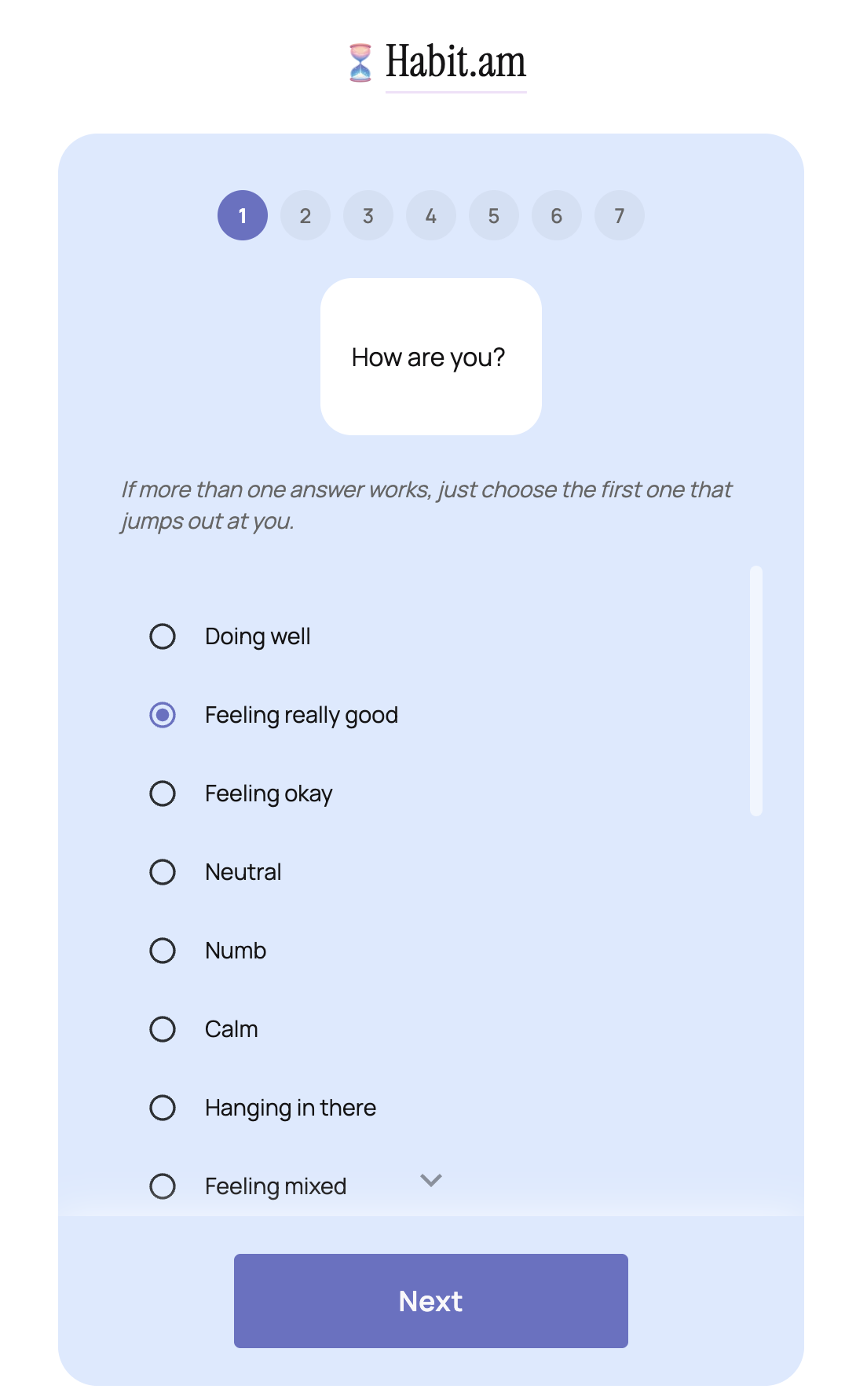

The result showed something I suspected: the easy "How are you feeling?" question was the least likely to lead to drop-offs. I went back and forth with my AI buddy to make sure the data sourcing was correct, sense-checking the data, returning it in different formats (counts instead of percentages), and poking holes to make sure it meant what I thought it meant. I also filtered the data to the period when only the original 5 questions were available (tied to a particular commit/deployment), and we saw that "How are you feeling?" outperformed the second-best question by 3x.
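For readers who want to try something similar, here is a minimal sketch of that kind of analysis. It is not the script from this session: the record shape, field names, and sample rows are assumptions, and it works on check-ins already loaded into memory rather than querying Firebase directly. It reports both counts and a rate, and accepts an optional date range to mimic filtering to a particular deployment period.

```typescript
// Hypothetical record shape; the field names are assumptions, not Habit's schema.
interface CheckIn {
  initialQuestion: string;
  completed: boolean;
  startedAt: Date;
}

interface QuestionStats {
  started: number;
  completed: number;
  completionRate: number; // completed / started
}

// Group check-ins by their first question, optionally limited to [from, to).
function completionByQuestion(
  checkIns: CheckIn[],
  range?: { from: Date; to: Date }
): Map<string, QuestionStats> {
  const stats = new Map<string, QuestionStats>();
  for (const c of checkIns) {
    const t = c.startedAt.getTime();
    if (range && (t < range.from.getTime() || t >= range.to.getTime())) continue;

    const s = stats.get(c.initialQuestion) ?? { started: 0, completed: 0, completionRate: 0 };
    s.started += 1;
    if (c.completed) s.completed += 1;
    s.completionRate = s.completed / s.started;
    stats.set(c.initialQuestion, s);
  }
  return stats;
}

// Made-up sample rows, for illustration only.
const sample: CheckIn[] = [
  { initialQuestion: "How are you feeling?", completed: true, startedAt: new Date("2024-01-05") },
  { initialQuestion: "How are you feeling?", completed: true, startedAt: new Date("2024-01-06") },
  { initialQuestion: "Battery level", completed: false, startedAt: new Date("2024-01-06") },
  { initialQuestion: "Battery level", completed: true, startedAt: new Date("2024-03-01") },
];

const januaryOnly = completionByQuestion(sample, {
  from: new Date("2024-01-01"),
  to: new Date("2024-02-01"),
});
januaryOnly.forEach((s, question) => {
  console.log(question, s.completed + "/" + s.started, s.completionRate);
});
```

Keeping the raw `started` and `completed` counts next to the rate makes it easier to spot a high percentage that rests on only a handful of check-ins.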

The next step was clear:

[Agent Mode] Lets make the initial question always this one:

"How are you?" (old starter from the pre-refactor journal) used a fixed list of phrases, e.g.:

"Doing well", "Feeling really good", "Feeling okay", "Neutral", "Numb", "Calm", "Hanging in there", "Feeling mixed", "Feeling some intense feelings", "Having a tough time", "Overwhelmed", "Feeling incredible", "Not sure", "Something else".

let's remove "something else" as an option and add a few more options if they request them.

Then I had to review, test, and tweak, making sure the additional options sounded good and the list was comprehensive. The big win today wasn't that it wrote the code to change the initial question. It was that together we were able to look at how the app was wired historically and how this specific feature performed across different versions.

Another day, another small improvement, and a big insight I got from just speaking with my codebase. The workflow win isn't even that the AI wrote the analysis script for me (as well as the 12 additional ones I requested to sense-check the data). It's that I didn't have to context-switch between thinking about which question performed best, writing a script, looking at the database, and checking the dates on my git commits. It was able to line up all these dated pieces into a coherent timeline, with the data alongside it.

About the author

I spend a lot of time thinking about software and business problems. Sometimes I get around to writing about them, and you get to read about it here.