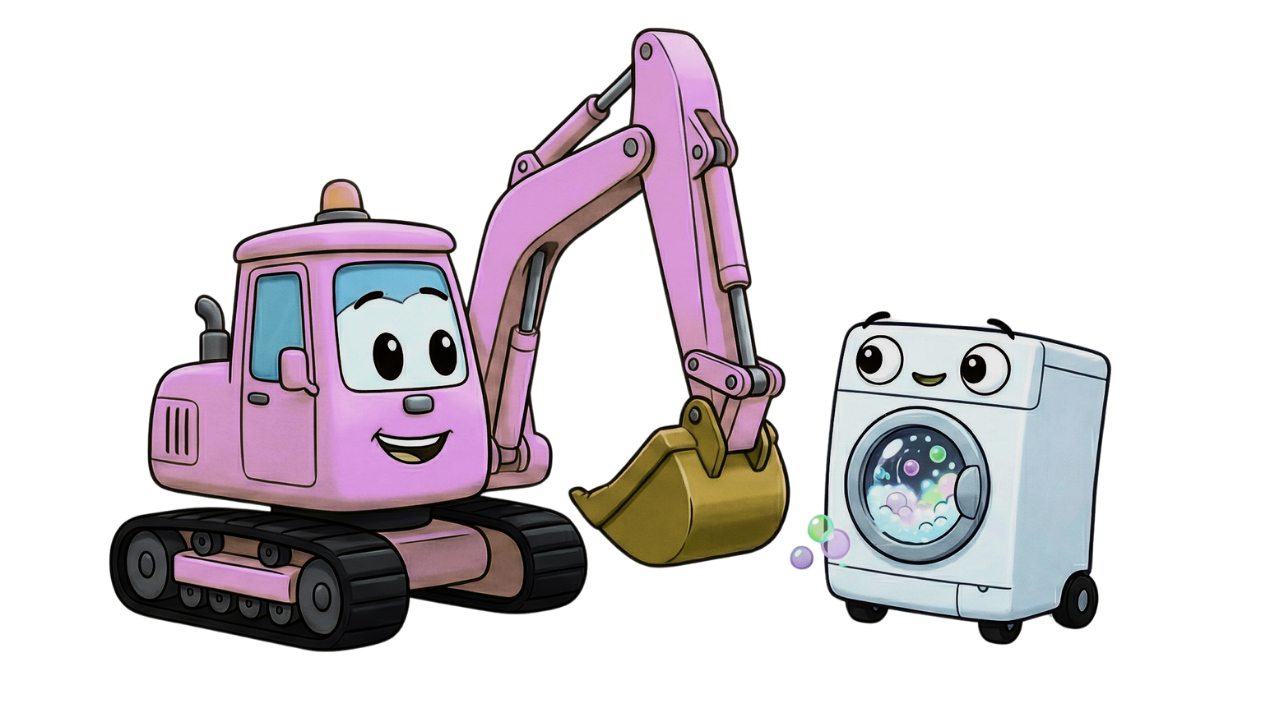

Why your AI agent should be an excavator, not a washing machine

topics: AI, Product, Design, Philosophy

Disclaimer: I used AI to enhance the clarity and structure of my writing, but the majority of this post was written by hand.

Right now, the prevailing narrative around agentic AI is one of effortless, hands-off autonomy. But the more I use agents, the more I think autonomy is the wrong goal. I previously wrote that "Agentic AI" is a trap, but my criticism was never of agents themselves; it was of the idea of completely autonomous agents.

In this post, I want to dig deeper into the issue of autonomy.

Some agents are like washing machines. You set a few settings: temperature, spin speed, pre-wash. Then, you let them run. They make a pleasant ding when they're done. Your only job is to move the result into the dryer. It is a highly bounded, predictable transaction.

Other agents are like excavators. They are fundamentally different beasts: extremely powerful, capable of reshaping landscapes in minutes, and because of that power, they must always be supervised.

But here's the thing: tech companies are selling us excavators and telling us to treat them like washing machines.

Tools like Openclaw have challenged the traditional "human in the loop" model with features like the heartbeat, which triggers the agent to take actions and make decisions autonomously. This, alongside a lot of the Claude marketing, has pushed the narrative that human oversight is optional. A lot of agentic AI messaging revolves around the dream of "running the agent while you sleep" and waking up to a finished product.

Anyone who has actually spent time in the trenches with these tools knows how easily, and how confidently, they make mistakes. And it's not just because you're using the wrong model: things like context poisoning can affect even the most powerful models. The human-out-of-the-loop idea is never going to work for powerful use cases.

When you are in the loop, it is incredibly easy to point out a mistake, correct the logic, and set the AI back on track. But if it's running overnight with zero supervision, compounding its own errors without a sanity check, it's highly likely you'll end up in a really strange and broken place by morning. As researchers noted in the 2023 paper "How Language Model Hallucinations Can Snowball" (Zhang et al.), once an AI commits to a mistake early in a chain of thought, it will stubbornly hallucinate further justifications to support it.
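To put rough numbers on that compounding, here is a back-of-the-envelope sketch. It rests on two loudly stated assumptions that are mine, not measurements: each step has a fixed 98% chance of being right, and steps fail independently of each other. Real agent runs need not behave this way, so treat the function `chance_of_clean_run` (a hypothetical name) as an illustration of the shape of the problem, not a prediction.

```python
def chance_of_clean_run(per_step_accuracy: float, steps: int) -> float:
    """Probability that every step in a run succeeds.

    Assumes each step succeeds independently with the same probability,
    which is a simplification made purely for illustration.
    """
    return per_step_accuracy ** steps


# 0.98 is an assumed, illustrative per-step accuracy, not a measured one.
for steps in (1, 10, 50, 100):
    probability = chance_of_clean_run(0.98, steps)
    print(f"{steps:>3} unsupervised steps: {probability:.1%} chance of no mistakes")
```

Under those assumptions, a human checkpoint every few steps effectively resets the count, which is one way to read the case for staying in the loop.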

What I've found is that even when I try to steer an agent in the right direction, it sometimes takes multiple cycles of prompting to get it to give up its confidently wrong belief.

You can't leave an excavator running overnight, but if you could, you would wake up to massive destruction most of the time.

That is why "human in the loop" isn't just a convenient principle for people who are merely cautious about AI. It is essential for those of us at the forefront of leveraging AI to its maximum capability.

The more powerful the tool is, the more crucial the presence (and training) of the human operator becomes.

The Washer vs. The Excavator

To understand why, we have to look at how the design paradigms for these two types of tools differ.

| Feature | The Washer | The Excavator |
|---|---|---|
| Environment | Closed, highly constrained, predictable. | Open-ended, complex, highly variable. |
| Error Cost | Low: your clothes are still a bit damp, or your wool has shrunk (user error). | High: you can damage your own or other people's property. |
| Input Style | Set and forget. | Continuous steering. |
| Role of the Human | Loading: basic skills needed, but nothing specialized. | Expert operator: requires specialized skills. |
| Training required to use | Asking ChatGPT how to operate it. | Theoretical and practical courses covering safety procedures, excavation and trenching hazards, load handling and stability, and emergency response protocols. |

The operator is a fundamental design pattern in AI products

When we strip away the marketing, the reality of agentic design comes into focus. In Andrew Ng's extensive writings on Agentic Design Patterns (2024), he emphasizes that human-in-the-loop workflows aren't a temporary crutch while we wait for AGI; they are a fundamental design pattern for reliable AI.

When a product is designed to be an excavator, its user interface and user experience should reflect that. It should invite the user to grab the levers, to pause, to inspect the progress, and to course-correct. We see this emerging in some agentic coding tools that allow you to stop the current process, reject changes partially, steer the current action and queue actions with different capability levels (ask, agent, plan).
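As a minimal sketch of what "grabbing the levers" could look like in code, here is a toy operator loop. Everything in it is hypothetical and invented for illustration: the names `Mode`, `Decision`, `Action`, `RunLog` and `run_with_operator` do not come from any real tool. The assumption is that queued actions carry one of the capability levels mentioned above (ask, plan, agent), that only agent-level actions can change anything, and that each of those passes through a human review callback that can approve it, reject it, or stop the run. Steering (rewriting an action mid-flight) is left out to keep the sketch short.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Callable, Iterable, List


class Mode(Enum):
    ASK = "ask"      # answer questions only, never change anything
    PLAN = "plan"    # propose steps, never execute them
    AGENT = "agent"  # execute steps, subject to operator review


class Decision(Enum):
    APPROVE = "approve"
    REJECT = "reject"  # skip this action, keep going
    STOP = "stop"      # halt the whole run


@dataclass
class Action:
    description: str
    mode: Mode


@dataclass
class RunLog:
    proposed: List[str] = field(default_factory=list)
    applied: List[str] = field(default_factory=list)
    rejected: List[str] = field(default_factory=list)
    stopped: bool = False


def run_with_operator(
    queue: Iterable[Action],
    review: Callable[[Action], Decision],
) -> RunLog:
    """Process queued actions, asking the operator before anything is applied."""
    log = RunLog()
    for action in queue:
        if action.mode is not Mode.AGENT:
            # Ask and plan actions only produce proposals; nothing is changed.
            log.proposed.append(action.description)
            continue
        decision = review(action)
        if decision is Decision.STOP:
            log.stopped = True
            break
        if decision is Decision.REJECT:
            log.rejected.append(action.description)
            continue
        log.applied.append(action.description)
    return log


# Hypothetical usage: the operator rejects one change and stops before deploying.
def cautious_operator(action: Action) -> Decision:
    if "Drop" in action.description:
        return Decision.REJECT
    if "production" in action.description:
        return Decision.STOP
    return Decision.APPROVE


log = run_with_operator(
    [
        Action("Outline the migration steps", Mode.PLAN),
        Action("Rename column users.name", Mode.AGENT),
        Action("Drop table users_backup", Mode.AGENT),
        Action("Deploy to production", Mode.AGENT),
    ],
    cautious_operator,
)
print(log)
```

The design choice worth noticing is that the review callback sits inside the loop, not at the end of it: the operator sees each powerful action before it lands, which is the excavator posture as opposed to the washing machine one.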

In her comprehensive piece "LLM Powered Autonomous Agents" (2023), OpenAI's Lilian Weng breaks down how agents use memory and planning. She flags that a weakness of plans is that LLMs "struggle to adjust plans when faced with unexpected errors, making them less robust compared to humans who learn from trial and error."

The opposite of autonomy is heteronomy

The idea of total autonomy and empowerment (for the agent) is a dangerous goal to aim for. Agents should be governed from the outside, by a human. This is called heteronomy.

We are trying to build pretty powerful tools, but the autonomous agent framing locks us into weaker and more fragile products by denying them the one thing they will never have but desperately need: human judgment. An AI product shouldn't just be measured by how completely it removes the human from the task, but by how effectively it empowers the human to do more than the human, on their own, could ever do.

"The agent does your job while you sleep" might grab your attention on social media, but "The agent empowers you to expand the reach of what your judgment and taste can impact" is, in my opinion, a more meaningful, resilient and rewarding product goal.